Recently I was approached about accessing the Atom feed services of the Dutch national SDI (PDOK).

After writing several posts on the subject, I realized that having access to all of the Atom feed services in FME would be a nice challenge and a useful addition.

So I set about figuring out what would be the best way to set it up. I realized that I wanted to have the option to select an individual service or several of them.

How to get all the URLs

Normally the GeoRSS reader is used to access an XML feed.

To be able to access a service you would have to select the URL from the web page and paste it into the reader.

This approach works fine if you only access a couple of services, but I would like to have them all available, especially since services get added and deleted over time.

Once I have all of them I can choose the ones I would like to use in my workspace.

Well, since FME is great at accessing data on the web, why not use it to retrieve the URLs of all the services?

The PDOK site is built with a certain logic: all of the services are ordered alphabetically.

Using that logic in FME enables you to select the services by accessing the HTML pages displaying the services on the PDOK website.

Figure: PDOK web page HTML

The XMLFragmenter can slice through the web page HTML like any other XML document.

Parsing the XML (another great feature of FME) enables the final selection of the URLs.

Figure: FME workspace
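Outside of FME, the same idea (read the listing page, keep only the links that point at feeds) can be sketched in a few lines of Python. This is only an illustration, not the workspace itself: the sample HTML, the `example.org` base URL and the rule that a feed link contains the text `atom` are all assumptions made up for the example, not the real PDOK page structure.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class FeedLinkCollector(HTMLParser):
    """Collect href values of <a> tags that look like feed links."""

    def __init__(self, base_url, marker):
        super().__init__()
        self.base_url = base_url
        self.marker = marker  # substring that identifies a feed link (assumption)
        self.urls = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href and self.marker in href:
            url = urljoin(self.base_url, href)  # resolve relative links
            if url not in self.urls:            # skip duplicates, keep page order
                self.urls.append(url)


def extract_feed_urls(html, base_url, marker="atom"):
    """Return the unique feed URLs found in an HTML string."""
    collector = FeedLinkCollector(base_url, marker)
    collector.feed(html)
    return collector.urls


# Made-up listing page, standing in for the real web page HTML.
SAMPLE_HTML = """
<html><body>
  <ul>
    <li><a href="/services/alpha/atom/alpha.xml">Alpha</a></li>
    <li><a href="/services/beta/atom/beta.xml">Beta</a></li>
    <li><a href="/about">About</a></li>
    <li><a href="/services/alpha/atom/alpha.xml">Alpha (again)</a></li>
  </ul>
</body></html>
"""

if __name__ == "__main__":
    for url in extract_feed_urls(SAMPLE_HTML, "https://example.org"):
        print(url)
```

In a real run the HTML would come from an HTTP request instead of a string, and the marker would have to match whatever the feed links on the page actually look like.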

Result

So after some XML parsing I got a list of all of the current PDOK Atom feed services.

You might think: well, I can just sit and copy and paste the URLs into a text file. Well, sure you can! Nobody is stopping you :)

But by using FME and its XML capabilities, you always end up with an up-to-date list.

When services are deleted or new ones introduced, the resulting list will reflect that.

Besides that, once you have the services list available, you can continue your data transformation, something which is not really possible with a text file :)

Figure: Atom feed services list.

Atom feeds in FME


As with anything FME, there is more than one way to skin the proverbial cat ;)

One way of using the services list in a workspace is through a startup Python script.

Yet another way would be to wrap the workspace into a custom reader, so that it can become part of your regular FME readers.

And finally you can just opt to continue developing the workspace.

There are quite a lot of FME transformers dedicated to web services; two of them are specific to GeoRSS feeds.

In this scenario the GeoRSSFeatureReplacer is very useful for extracting all the information from the server response into attributes.
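To give an idea of what "response into attributes" means, here is a rough Python sketch that turns the entries of an Atom document into plain dictionaries. It is not how the transformer is implemented, and the sample feed, the `example.org` links and the chosen attribute names (`title`, `updated`, `link`) are assumptions for illustration only.

```python
import xml.etree.ElementTree as ET

# The Atom namespace as defined by the Atom syndication format.
ATOM_NS = {"atom": "http://www.w3.org/2005/Atom"}

# Made-up server response, standing in for a real feed.
SAMPLE_FEED = """<feed xmlns="http://www.w3.org/2005/Atom">
  <title>Example service</title>
  <entry>
    <title>Dataset A</title>
    <id>https://example.org/a</id>
    <updated>2015-01-01T00:00:00Z</updated>
    <link rel="alternate" href="https://example.org/a.zip"/>
  </entry>
  <entry>
    <title>Dataset B</title>
    <id>https://example.org/b</id>
    <updated>2015-02-01T00:00:00Z</updated>
    <link rel="alternate" href="https://example.org/b.zip"/>
  </entry>
</feed>
"""


def entries_to_attributes(feed_xml):
    """Return one dict of attributes per <entry> in an Atom document."""
    root = ET.fromstring(feed_xml)
    rows = []
    for entry in root.findall("atom:entry", ATOM_NS):
        link = entry.find("atom:link", ATOM_NS)
        rows.append({
            "title": entry.findtext("atom:title", default="", namespaces=ATOM_NS),
            "updated": entry.findtext("atom:updated", default="", namespaces=ATOM_NS),
            # An entry without a <link> element gets an empty string.
            "link": link.get("href", "") if link is not None else "",
        })
    return rows


if __name__ == "__main__":
    for row in entries_to_attributes(SAMPLE_FEED):
        print(row)
```

Each dictionary plays the role of one feature with its attributes; geometry, which a GeoRSS feed can also carry, is left out of this sketch.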

Services selection

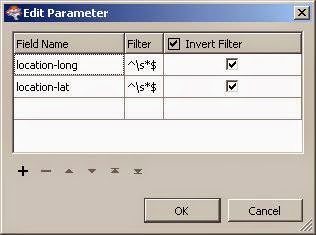

The services selection should be done at run time to provide extra flexibility.

This is where the combination of a TestFilter and a user parameter comes in handy.

To retrieve the parameter value(s) into my workspace, I use the aptly named ParameterFetcher :)

Then it's a simple matter of setting up the correct test to retrieve the selected services.
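For readers who think in code, the test boils down to "keep a service if its name is among the selected values". Below is a minimal Python sketch of that logic, assuming the selection arrives as one space-delimited string with quotes around names that contain spaces; that format, the service names and the `select_services` helper are assumptions for this example and not taken from the workspace.

```python
import shlex


def select_services(services, parameter_value):
    """Keep only the services whose name occurs in the parameter value.

    services        -- list of dicts, each with at least a "name" key
    parameter_value -- space-delimited selection, e.g. 'alpha "beta service"'
    """
    selected = set(shlex.split(parameter_value))
    if not selected:
        # Nothing selected: pass everything through (a design choice of this sketch).
        return list(services)
    return [service for service in services if service["name"] in selected]


# Hypothetical services list, as it might come out of the URL extraction step.
SERVICES = [
    {"name": "alpha", "url": "https://example.org/alpha.xml"},
    {"name": "beta service", "url": "https://example.org/beta.xml"},
    {"name": "gamma", "url": "https://example.org/gamma.xml"},
]

if __name__ == "__main__":
    for service in select_services(SERVICES, 'alpha "beta service"'):
        print(service["name"], service["url"])
```

Passing everything through on an empty selection keeps the "give me all services" case simple; a stricter variant could return an empty list instead.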

So to wrap it up:

- Now I have a new PDOK atom feeds reader in FME, and I know for sure that it will always provide me with an updated list of services.

- As you know, FME is a no-code approach.

- With just 8 transformers the workspace is super simple.

Life made easy the FME way. Interested? Give me a call.